ROBOTICS

FileMarket AI Data Labs

Get Paid to Train Robots with Your Actions & Voice.

Join the dedicated robotics data collection platform. Provide speech and egocentric data for Physical AI and earn rewards for every successful session.

Start Earning Now

Human Perception

Recognition Lab

INIT_PROTOCOL: VISION_RECOGNITION

SOURCE: EGOCENTRIC_FACTORY

DATA: SKELETON_PERCEPTION_SYNC

Vision Lab

Egocentric Motion

Active

High-fidelity 6-DOF tracking for humanoid manipulation and environment mapping.

Recognition

Human Perception

Active

AI models for skeletal posture estimation, intent prediction, and facial biometric analysis.

Audio Hub

Dialect Synthesis

Active

Multilingual speech datasets for LLM voice generation and emotion recognition.

Sensor Array

Tactile Pressure

Active

Real-time pressure mapping for robotic hand manipulation and force feedback.

Kinematics

Skeletal Mapping

Active

Full-body human motion capture for humanoid robot imitation learning.

Protocol

AES-256 Pipeline

Active

AES-256-encrypted data streaming for leading robotics labs and researchers.

THE DATA FACTORY.

We run an egocentric human-motion data factory with staged environments for capturing embodied video.

400k+

Samples Validated

24/7

Lab Capture

High Fidelity

Zero-loss sensor capture pipelines.

Case Studies

Proven results in human-robot interaction.

Hardware Prototyping

Engineering Lab

INIT_PROTOCOL: HARDWARE_BUILD

SOURCE: ROBOTICS_TEAM_X1

DATA: ACTUATOR_STRESS_TEST

Internal Robotics Team

We don't just use AI; we build the robots that power it. Our in-house engineering team prototypes custom robotic skeletons and actuators to push the boundaries of embodied intelligence.

In-House Manufacturing

Rapid Build Cycles

Multidisciplinary Team

Our Own Hardware

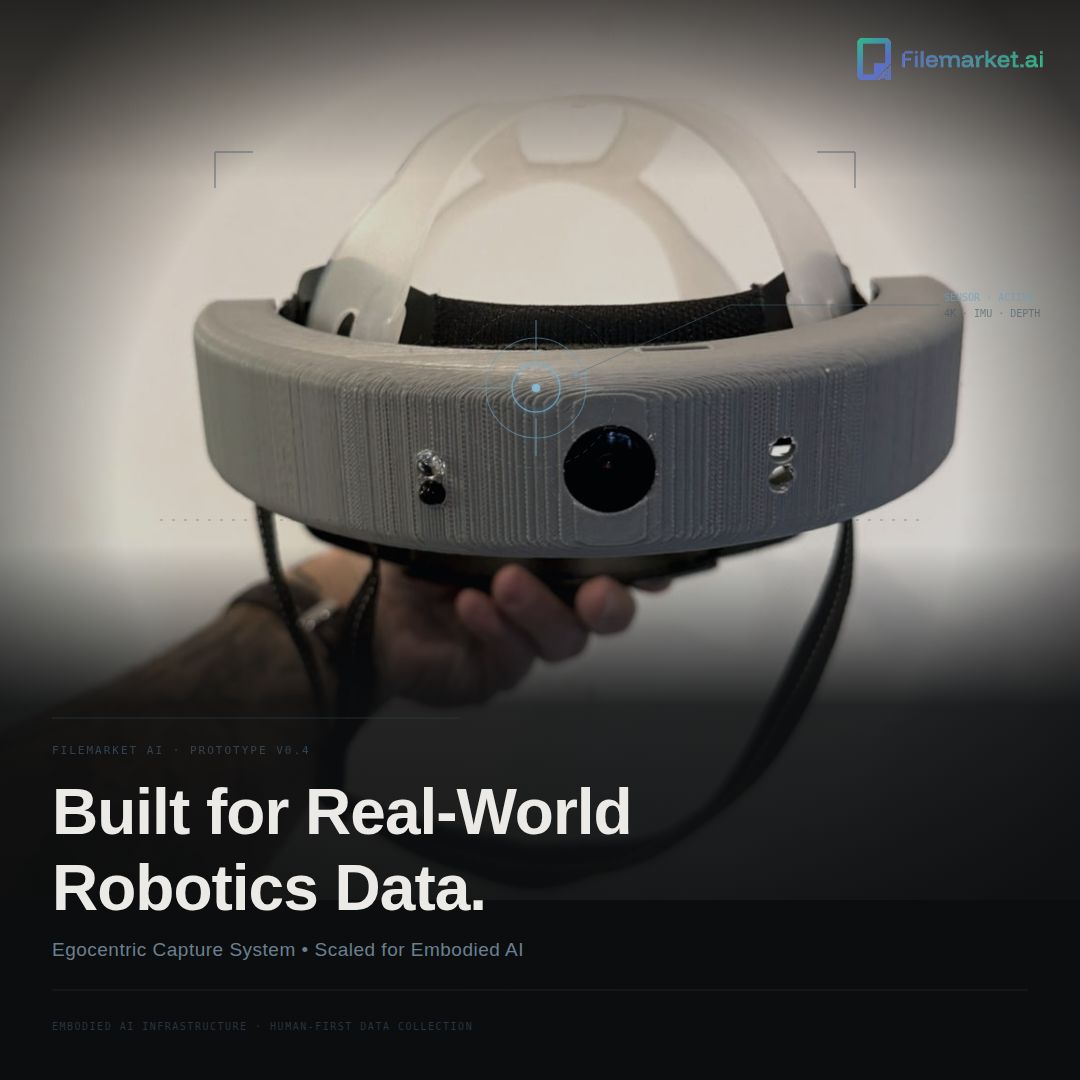

Data Acquisition Unit

PRODUCT_ID: EDCU-1_PROTO

BUILD: IN-HOUSE_SQUAD_01

STATUS: ACTIVE_DEPLOYMENT

Built for Robotics Data.

Off-the-shelf hardware isn't enough for the next generation of embodied AI. We engineered the **EDCU-1**, our own proprietary data collection headset, to capture the high-fidelity human motion and egocentric vision that standard cameras miss.

Custom PCB Design

Proprietary Firmware

Hardware Specifications: